AWS Trainium3 UltraServers: Revolutionizing AI Training with 3nm Power and Nvidia Harmony – What’s Next for Affordable AI?

Why Trainium3 Feels Like a Game-Changer for Dreamers Like Us

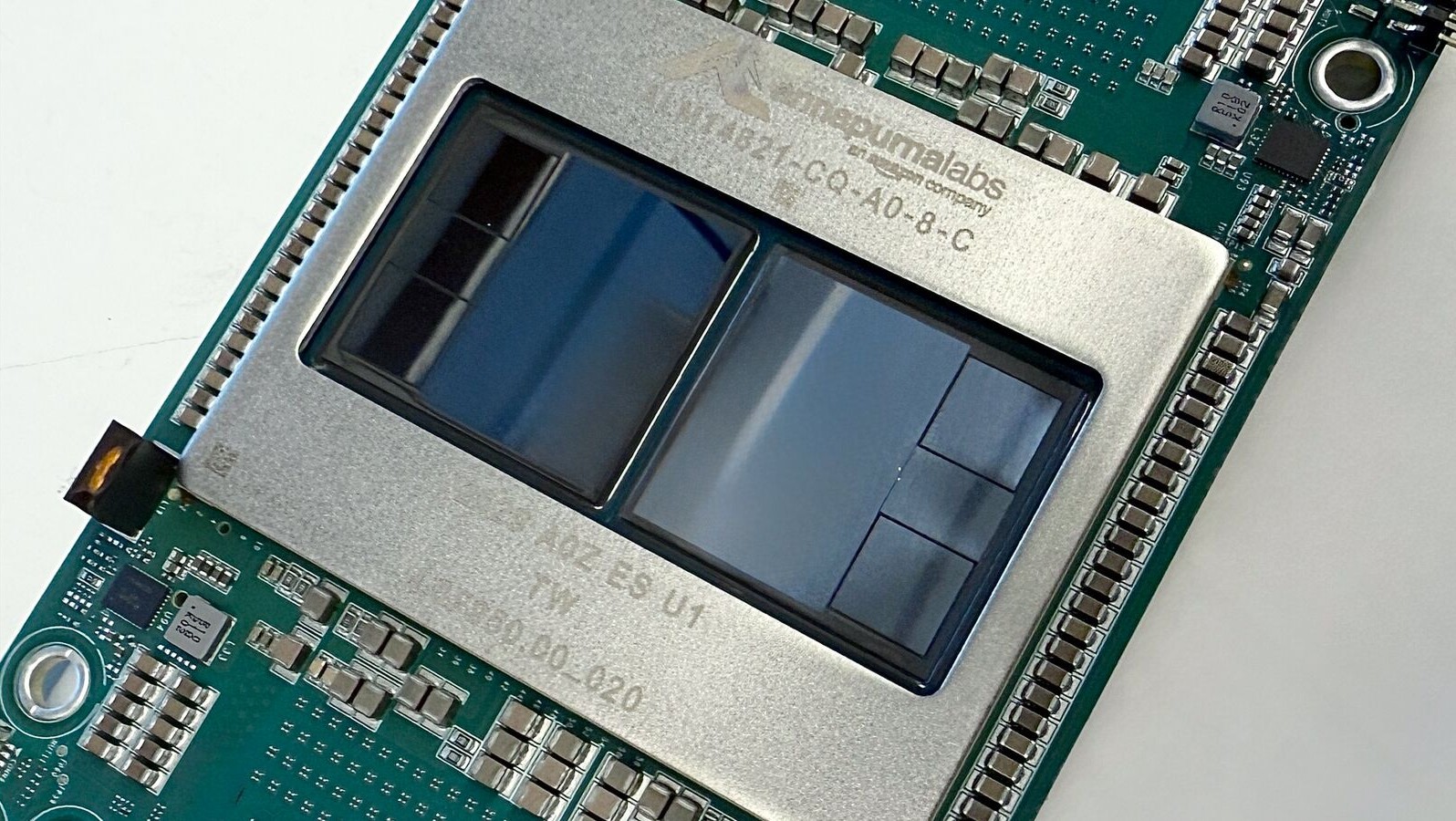

Imagine waking up to news that could make your wildest AI project not just possible, but affordable and lightning-fast. That’s the heart-pounding excitement from AWS re:Invent 2025, where Amazon Web Services dropped the bombshell on Trainium3 UltraServers, built around its first 3-nanometer AI chip. Announced just days ago on December 2, this isn’t another incremental update; it’s a heartfelt promise to democratize AI, slashing training times from months to weeks and cutting inference costs dramatically for startups and giants alike. As someone who’s followed the AI race with bated breath, I can’t help but feel a surge of optimism: finally, tools that empower creators without Nvidia’s premium price tag dominating the narrative.

What makes this emerging Trainium3 news so special? It’s low-key right now, buried in tech conference buzz, but poised to explode as developers scramble for cost-effective alternatives amid soaring AI demand. Early adopters like Anthropic – yes, the Claude wizards – are already raving about 3x higher throughput and 4x faster responses. Picture this: a single UltraServer packing 144 Trainium3 chips, scalable to a million chips across clusters. It’s emotional because it levels the playing field, turning “AI for the elite” into “AI for the passionate innovator.”


Breaking Down the Trainium3 Specs: Power That Hits You in the Feels

Let’s geek out on the nitty-gritty because these specs aren’t just numbers – they’re lifelines for your next big idea. Trainium3 UltraServers boast 4.4x more compute performance and 4x greater energy efficiency over Trainium2, with each system housing up to 144 of these 3nm marvels. That’s not hype; it’s engineered magic from AWS’s homegrown silicon, optimized for both training massive models and delivering real-time inference at peak loads.

- Performance Leap: 4.4x more compute than gen-2, with nearly 4x more memory bandwidth – more headroom for memory-hungry models and massive datasets.

- Scalability Dream: Link thousands of UltraServers for up to 1 million chips, perfect for frontier AI like next-gen LLMs.

- Cost Crusher: Customers report cutting training and inference costs by up to 50%, thanks to superior price-performance.

- Energy Hero: 4x efficiency means greener AI, easing the guilt of those power-hungry data centers we all worry about.
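To put the scalability and efficiency bullets in concrete terms, here is a back-of-the-envelope sketch in Python. It uses only the figures quoted above (144 chips per UltraServer, up to 1 million chips per cluster, 4x energy efficiency); the helper names are hypothetical, and the energy helper rests on an idealized assumption that a 4x efficiency gain translates directly into 4x less energy for the same job, which real workloads won’t match exactly.

```python
# Back-of-the-envelope sizing using the figures quoted in this article.
CHIPS_PER_ULTRASERVER = 144      # Trainium3 chips per UltraServer (as announced)
MAX_CLUSTER_CHIPS = 1_000_000    # upper bound for a linked cluster (as announced)


def ultraservers_needed(target_chips: int,
                        chips_per_server: int = CHIPS_PER_ULTRASERVER) -> int:
    """Smallest number of fully populated UltraServers covering target_chips."""
    if target_chips <= 0:
        return 0
    # Integer ceiling division avoids floating-point rounding surprises.
    return -(-target_chips // chips_per_server)


def energy_for_same_work(baseline_energy: float, efficiency_gain: float = 4.0) -> float:
    """Idealized energy for the same job if performance per watt rises by efficiency_gain.

    Assumption (hypothetical): the whole efficiency gain becomes proportionally less energy.
    """
    if efficiency_gain <= 0:
        raise ValueError("efficiency_gain must be positive")
    return baseline_energy / efficiency_gain


if __name__ == "__main__":
    servers = ultraservers_needed(MAX_CLUSTER_CHIPS)
    print(f"{servers:,} UltraServers hold {servers * CHIPS_PER_ULTRASERVER:,} chips")
    # Hypothetical job that used 1,000 MWh on Trainium2-class hardware.
    print(f"{energy_for_same_work(1_000.0):,.0f} MWh on Trainium3 (idealized)")
```

Running it shows that a million-chip cluster works out to 6,945 fully populated UltraServers (1,000,080 chips), which is why “thousands” of linked UltraServers is the right mental picture. The hypothetical 1,000 MWh job drops to 250 MWh only under the idealized efficiency assumption.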

Powered by AWS’s custom networking, these beasts are built for the long haul, and AWS is already teasing Trainium4 with Nvidia compatibility for hybrid setups. It’s like AWS read our minds, blending independence with ecosystem harmony.

How Trainium3 Outshines the Competition

Nvidia’s GPUs have ruled the roost, but Trainium3 challenges that throne with a tailored AI focus. While H100s excel broadly, Trainium3 homes in on the training and inference sweet spots, aiming for better throughput per chip. Marvell’s recent Celestial AI buy signals the chip wars heating up, yet AWS’s in-house edge keeps costs 30–50% lower for compatible workloads. Emotionally, it’s thrilling: no more vendor lock-in fears; mix Trainium with Nvidia for the best of both worlds.
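A 30–50% saving is easiest to read as a range applied to a real budget. The Python sketch below applies it to a hypothetical $100,000 GPU bill; the function name and the baseline figure are illustrative assumptions, and the savings only hold for the “compatible workloads” the claim is scoped to.

```python
def discounted_cost_range(baseline_cost: float,
                          low_pct: float = 30.0,
                          high_pct: float = 50.0) -> tuple[float, float]:
    """Return (best_case, worst_case) cost after a low_pct..high_pct saving.

    best_case applies the larger saving, worst_case the smaller one.
    Results are rounded to cents. Hypothetical helper for illustration only.
    """
    if not 0 <= low_pct <= high_pct <= 100:
        raise ValueError("expected 0 <= low_pct <= high_pct <= 100")
    best_case = round(baseline_cost * (1 - high_pct / 100), 2)
    worst_case = round(baseline_cost * (1 - low_pct / 100), 2)
    return best_case, worst_case


if __name__ == "__main__":
    # Hypothetical $100,000 GPU bill for a workload that ports cleanly to Trainium.
    best, worst = discounted_cost_range(100_000)
    print(f"Estimated Trainium bill: ${best:,.2f} to ${worst:,.2f}")
```

For the hypothetical $100,000 bill, the same job would land somewhere between $50,000 and $70,000 if the claimed range holds; plug in your own baseline to see what the range means for your project.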

Real-World Impact: Stories That Warm the Heart

Hearing from pioneers using Trainium3 hits different. Anthropic, an Amazon-backed AWS partner, leveraged it for Claude advancements, turning ambitious visions into reality faster. Japan’s Karakuri, Splash Music, and Decart echo this: inference costs plummeted, unleashing creativity without budget woes. These aren’t faceless corps; they’re teams like yours, pushing boundaries in generative music and decision engines.

For startups scraping by, EC2 Trn3 instances offer an accessible entry point to Trainium3. No more praying for GPU quotas: broader volumes arrive in early 2026, extending access to mid-sized players. It’s emotional fuel: AWS CEO Andy Jassy called Trainium a “multibillion-dollar business,” signaling its growing role in Bedrock inference. Imagine your AI agent fleet, secure and scalable, rivaling big tech.

Future Visions: Trainium3 Paving the AI Utopia

Peering ahead, Trainium3 isn’t a flash in the pan; it’s the foundation for an AI explosion. AWS plans to double its data center power capacity again by 2027, fueling Trainium clusters like Project Rainier (a Trainium2 cluster for Anthropic expected to reach 1M chips). Nvidia-friendly Trainium4 looms, enabling seamless hybrid inference; think Bedrock as big as EC2. Expect searches for “Trainium3 UltraServers” to surge as devs hunt for alternatives amid chip shortages.

AI Agents and Beyond: The Emotional Horizon

Secure AI agents? Trainium3 powers SageMaker and Bedrock stacks, tipping enterprises into production. Healthcare, autonomous systems, personalized edtech: all stand to accelerate. Efficiency also makes AI more sustainable by shrinking its carbon footprint. We’re on the cusp of human-AI symbiosis, where ideas flow freely.

Broader ripples: the semiconductor landscape shifts as hyperscalers like AWS chip away at Nvidia’s moat. Expect Trainium3 adoption to spike after re:Invent, driving searches for “AWS Trainium3 vs Nvidia.” For you, it’s opportunity: build now, lead tomorrow.

Why This Matters to You: Emotional Call to Action

This Trainium3 breakthrough stirs something deep: hope that tech serves humanity, not just hyperscalers. Whether you’re a solo dev or scaling a startup, EC2 Trn3 access democratizes power. Dive in via the AWS console; early birds like Anthropic are already reaping the first fruits. The future’s bright, efficient, and yours. Let’s build it together.

To wrap up, AWS Trainium3 UltraServers mark a pivotal, emerging shift in AI infrastructure. From 3nm prowess to Nvidia synergy, they’re engineered for our dreams. Stay tuned; the AI revolution just got personal.

FAQ: Your Burning Questions on Trainium3 Answered

What is AWS Trainium3 and why the hype?

Trainium3 is AWS’s 3nm AI chip, packaged in UltraServers, offering up to 4.4x more compute than Trainium2 for training and inference at a fraction of comparable Nvidia costs for compatible workloads. The hype stems from its re:Invent 2025 unveiling and the promise of broader access.

How does Trainium3 compare to Nvidia GPUs?

It targets AI-specific workloads, pairing 4x the energy efficiency of Trainium2 with lower costs, and the Trainium4 roadmap adds Nvidia integration. It’s not a full replacement, but a killer complement.

When can I use Trainium3 UltraServers?

Trn3 UltraServers are available now via EC2, with full volumes expected in early 2026. Early adopters like Anthropic are already running on them.

Will Trainium3 lower my AI project costs?

For compatible workloads, very likely. Customers report sharply lower inference bills, and 3x higher throughput with 4x faster responses means fewer resources per request.

What’s next after Trainium3?

Next up: Trainium4 with deeper Nvidia ties, massive clusters like Rainier2, and an ambition for Bedrock inference to rival EC2 in scale.